Ultimate Commander Windows Port

I gave a demo of Ultimate Commander, my master's thesis project, to Vilmos Bilicki, my university mentor. He was enthusiastic and told me he'd use UC if it ran on Windows. I had considered a Windows port before, but his push finally got me to do it.

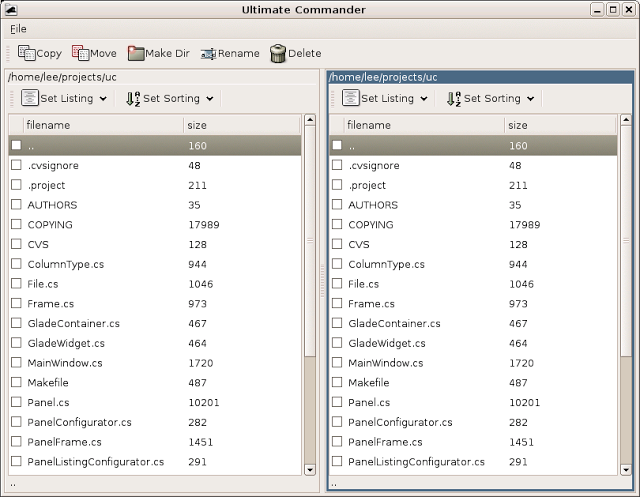

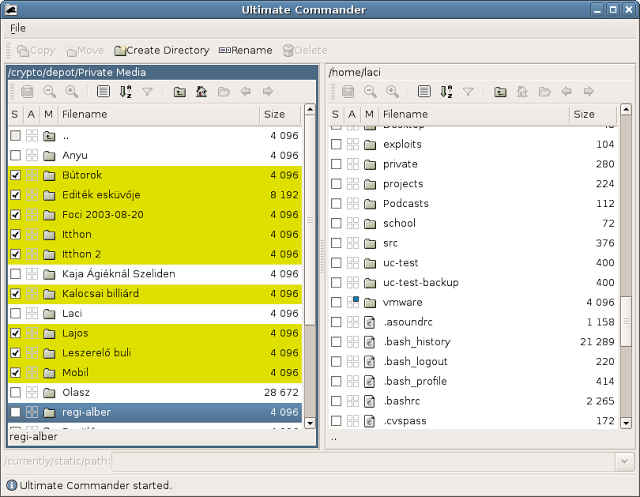

I had a talk with Francisco T. Martinez a la Paco on #Mono. He directed me to his experimental Windows Mono Installer, which incorporates the Gnome bindings, not just Gtk# as the official installer. Using his installer, it was easy to port UC to Windows. I’ve not entirely ported it, to be honest; just made the GUI work, but it’s nice to see it appear on Windows:

I'll try to keep the Windows port maintained. I think it's worth the extra effort, which Mono keeps fairly light.